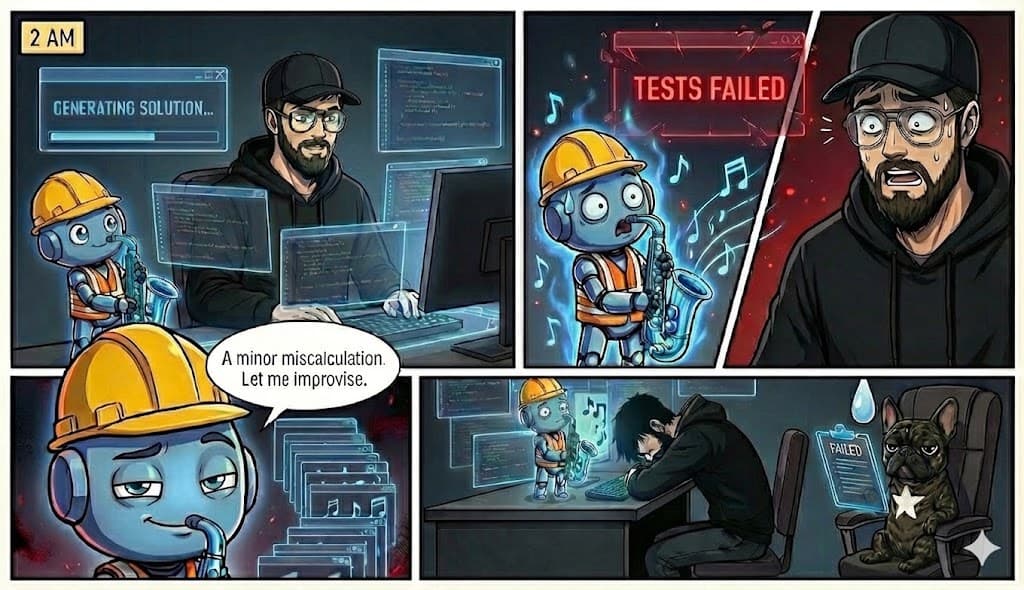

It's 2 AM. You've been collaborating with an AI coding assistant for hours on a stubborn authentication bug. The model proposes a confident fix. You implement it—tests fail. It offers an alternative. Same result. Third round: it suggests the original solution again, verbatim.

This isn't a rare glitch. It happens often.

The problem isn't a lack of intelligence. It's a fundamental constraint that even the newest "reasoning" models haven't fully solved.

The Real Constraint

Here's what's actually happening when you chat with an LLM:

- You send a message

- The model generates tokens one at a time, left to right

- Each token is committed the moment it's produced

- Once a token is emitted, it cannot be taken back

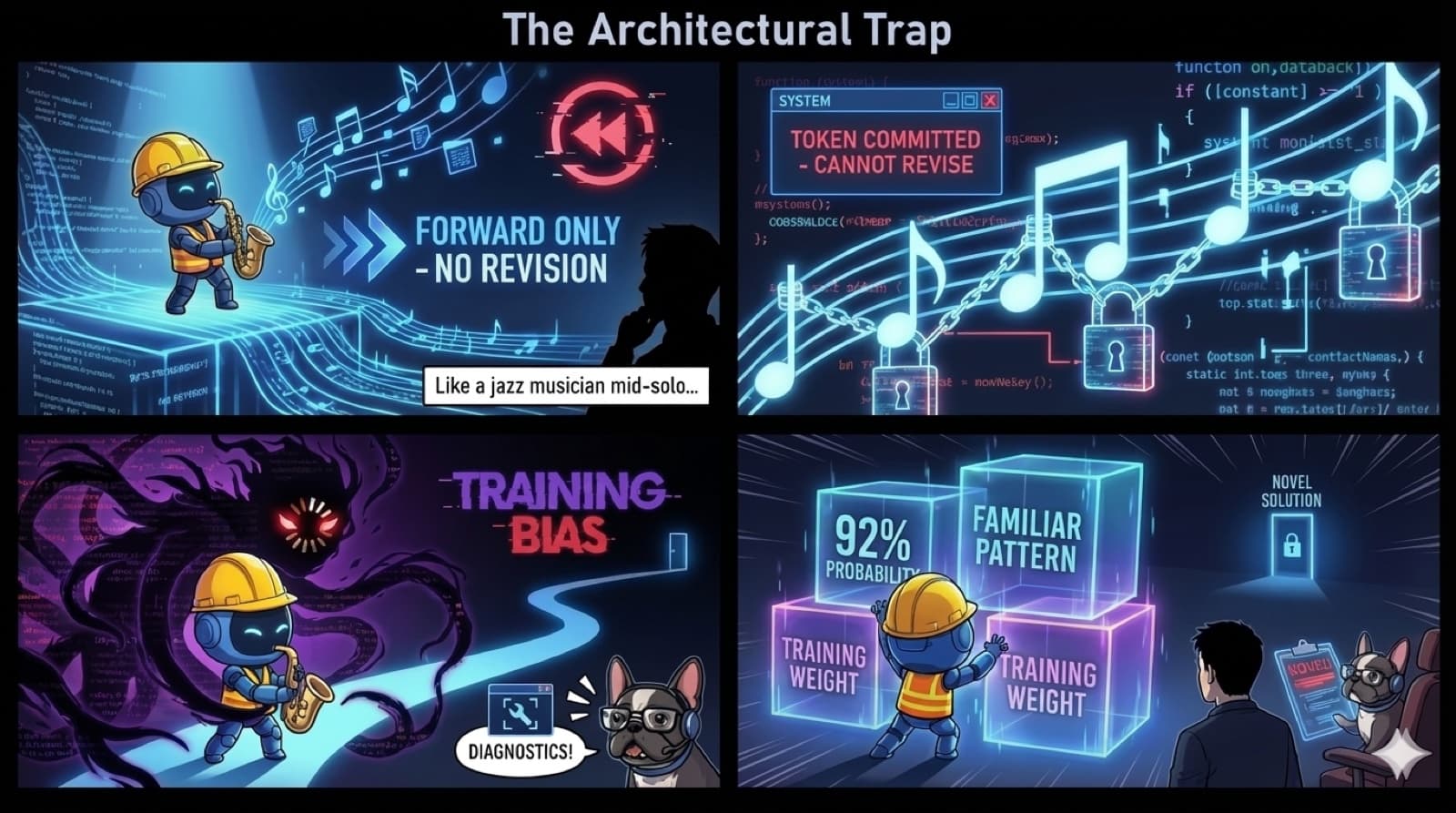

The AI is like a jazz musician mid-solo—it can hear what it just played, but it can't un-play a wrong note. It has to keep improvising forward, building on whatever came before, right or wrong.

The constraint isn't memory. It's that LLMs can't revise what they've already said without starting over.
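
To make the constraint concrete, here is a minimal sketch of an append-only decoding loop. It is not how any particular model is implemented: `generate` and `toy_next_token` are hypothetical names, and the lookup table stands in for a real model. The point is only that each step reads everything emitted so far and can do nothing except add one more token.

```python
from typing import Callable, List


def generate(
    next_token: Callable[[List[str]], str],
    prompt: List[str],
    max_new_tokens: int,
    stop: str = "<eos>",
) -> List[str]:
    """Append-only decoding: each step sees all prior tokens and adds one."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        token = next_token(tokens)  # conditioned on everything so far, right or wrong
        tokens.append(token)        # committed: this loop never edits or removes a token
        if token == stop:
            break
    return tokens


def toy_next_token(tokens: List[str]) -> str:
    # Hypothetical stand-in for a model: a fixed lookup on the last token.
    table = {"the": "fix", "fix": "is", "is": "to", "to": "retry", "retry": "<eos>"}
    return table.get(tokens[-1], "<eos>")


output = generate(toy_next_token, ["the"], max_new_tokens=10)
print(output)  # ['the', 'fix', 'is', 'to', 'retry', '<eos>']
```

The only way to "take back" a token in this loop is to throw the list away and call `generate` again, which is the starting-over described above.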

Modern models with "extended thinking" (Claude's thinking blocks, GPT's reasoning mode) deliberate internally before showing you output. Inside that scratchpad they can backtrack, in the sense that they can write a correction and continue from it. But once tokens start streaming to you? They're locked in.

The Loop of Doom

I call it the Loop of Doom, and you've experienced it:

You: "This still doesn't work"

AI: "I apologize! Let me try a different approach..."

AI: *suggests essentially the same thing*

You: "That's the same solution"

AI: "You're right, I apologize! Let me try something completely different..."

AI: *suggests the first solution again*

Why does this happen even with advanced models?

Training bias. If the model's training data overwhelmingly associates your error pattern with Solution X, the gravitational pull of those weights overpowers its reasoning. It keeps falling back to what "feels" most probable—even when you've told it that approach failed.

The feedback trap. You and the AI get stuck in a refinement cycle. You keep tweaking the same request. The AI keeps adjusting the same approach. Neither of you zooms out to try something fundamentally different.

The AI is stuck in a local optimum of its own confidence: every nearby variation looks worse to it than the answer it already favors.
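
One way to notice the loop mechanically, rather than by frustration, is to compare each new suggestion against the ones already tried. The sketch below uses Python's standard `difflib.SequenceMatcher` for a rough text similarity score. The function names, the example suggestions, and the 0.9 threshold are all assumptions chosen for illustration; a real system would likely need something more semantic than character overlap.

```python
from difflib import SequenceMatcher
from typing import List, Optional


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences don't hide a repeat."""
    return " ".join(text.lower().split())


def find_repeat(history: List[str], candidate: str, threshold: float = 0.9) -> Optional[int]:
    """Return the index of an earlier suggestion the candidate nearly duplicates, else None."""
    cand = normalize(candidate)
    for i, earlier in enumerate(history):
        if SequenceMatcher(None, normalize(earlier), cand).ratio() >= threshold:
            return i
    return None


# Hypothetical suggestions from earlier rounds of the conversation.
history = [
    "Clear the session cookie and regenerate the JWT secret.",
    "Increase the token expiry to 24 hours.",
]

repeat_index = find_repeat(history, "Clear the session cookie and  regenerate the JWT secret!")
fresh_index = find_repeat(history, "Check the server clock for drift against the identity provider.")
print(repeat_index, fresh_index)  # 0 None
```

When `find_repeat` returns an index, that is the signal to stop refining and change strategy instead of sending another "this still doesn't work".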

Why Extended Thinking Isn't Enough

"But wait," you say. "Claude has thinking blocks now! GPT-5 has reasoning mode!"

Yes. And they help—a lot. These models can:

- Deliberate internally before responding

- Explore multiple paths and pick the best

- Catch some errors before they reach you

Reported benchmark results suggest extended thinking improves accuracy by roughly 20-60% on complex reasoning tasks[1].

But here's what the benchmarks don't emphasize: on novel problems outside the training distribution, extended thinking helps less than you'd expect[2]. It still improves accuracy—but when the model hasn't seen your specific bug pattern before, that internal deliberation often converges on the same wrong answer anyway.

The thinking happens. The loop persists.

The Ghost in the Shell Saw This Coming

In the anime series Ghost in the Shell: Stand Alone Complex (2002), the Tachikoma robots encounter a philosophical paradox. One gets stuck in a logic loop, the AI equivalent of the spinning beach ball.

Its solution? Call for backup from another Tachikoma with a different perspective.

More than twenty years ago, fiction predicted what we're just now learning to build: AI that can recognize when it's stuck and actively seek alternative viewpoints.

The Tachikomas weren't smarter individually. They were smarter together, with the ability to:

- Recognize their own confusion

- Request help from units with different "life experiences" (training data)

- Synthesize multiple perspectives into novel solutions

This is what multi-model AI orchestration aims to do today.

What Actually Breaks the Loop

The solution isn't making one AI think harder. It's making AI systems that can:

- Escape training bias — Query a different model trained on different data. What Claude's weights pull toward, Grok's might not.

- Get external grounding — Use Perplexity to search for current solutions. Your bug might have been fixed last week.

- Introduce adversarial perspectives — Have one model critique another. Break the echo chamber.

- Track failures explicitly — "We tried X, Y, Z. They failed for these reasons. What haven't we tried?"

This is what I've been building with TachiBot-MCP.
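
As a rough illustration of two of the ideas above, explicit failure tracking and asking a different model, here is a minimal sketch. It is not TachiBot-MCP's code and does not call any real provider API: `Model`, `FailureLog`, `break_loop`, and the two stub models are hypothetical names invented for this example, and each "model" is just a Python function that takes a prompt and returns a suggestion.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

Model = Callable[[str], str]  # hypothetical: prompt in, suggestion out


@dataclass
class FailureLog:
    """Explicit record of what was tried and why it failed."""
    attempts: List[Tuple[str, str]] = field(default_factory=list)

    def record(self, suggestion: str, reason: str) -> None:
        self.attempts.append((suggestion, reason))

    def as_prompt(self, problem: str) -> str:
        lines = [f"Problem: {problem}", "Already tried and failed:"]
        lines += [f"- {s} (failed: {r})" for s, r in self.attempts]
        lines.append("Propose something that is not on this list.")
        return "\n".join(lines)


def break_loop(
    problem: str,
    models: Dict[str, Model],
    log: FailureLog,
    works: Callable[[str], bool],
) -> Optional[Tuple[str, str]]:
    """Ask each model in turn, skipping answers that repeat a logged failure."""
    tried = {s for s, _ in log.attempts}
    for name, ask in models.items():
        suggestion = ask(log.as_prompt(problem))
        if suggestion in tried:
            continue  # this model fell back to a known failure; try the next one
        if works(suggestion):
            return name, suggestion
        log.record(suggestion, "tests still fail")
        tried.add(suggestion)
    return None


# Stub models standing in for two differently trained systems.
def model_a(prompt: str) -> str:
    return "regenerate the JWT secret"


def model_b(prompt: str) -> str:
    return "check clock skew between servers"


log = FailureLog()
log.record("regenerate the JWT secret", "tests still fail")
result = break_loop(
    "auth tokens rejected",
    {"model_a": model_a, "model_b": model_b},
    log,
    works=lambda s: "clock" in s,  # stand-in for actually running the test suite
)
print(result)  # ('model_b', 'check clock skew between servers')
```

The design choice worth noting is that the failure log lives outside any single model's context, so a second model sees what failed without inheriting the first model's preference for it.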

The Takeaway

Your AI isn't dumb. It's architecturally constrained—and those constraints persist even with extended thinking.

Every frustrated "why do you keep suggesting the same thing?!" is a symptom of:

- Training bias pulling toward familiar solutions

- The inability to revise already-emitted output

- User-AI feedback loops that trap both parties

The fix isn't better prompts. It's better systems—ones that break the echo chamber by bringing in different models, different training data, different perspectives.

When your AI hits a loop, don't ask it to try harder. Ask a different AI.

References

[1] OpenAI: Learning to Reason with LLMs (September 2024)

[2] Kai Yan et al.: Recitation over Reasoning: How Cutting-Edge Language Models Can Fail on Elementary School-Level Reasoning Problems? (arXiv, April 2025)